“I believe that at the end of the century the use of words and general educated opinion will have altered so much that one will be able to speak of machines thinking without expecting to be contradicted.” – Alan Turing

After spending an afternoon with Teradeep’s May 2015 Neural Network Demo, I feel both intrigued and motivated to explore machine learning and artificial intelligence in greater depth.

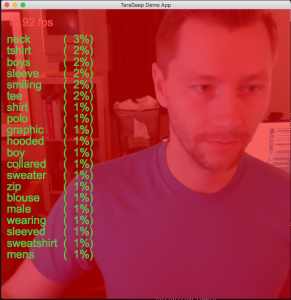

Observations from tinkering with their demo app:

- You can’t modify the app to use widescreen video. The network expects a particular aspect ratio.

- The fps hovers around 11 on my MacBook Pro.

- Most of the time, the network seems to “lack confidence”: the percentage it assigns to whatever category is in the frame is often low. This could just be a byproduct of the UI showing raw numbers that would make more sense if you knew how the algorithm works.

- Interpreting the data output is difficult, only because I’m human. An unexpected irony, probably because of how much of a novice I am in the world of AI and image processing.

- Some of the settings are fun to play with. You can change the number of frames that the network will use to detect objects, change the number of terms shown on the screen, etc. I didn’t really see any major benefit to modifying the settings, though, and I assume that they tuned the AI to behave at its very best for the default demo.
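To make the “low confidence” observation a little more concrete, here is a minimal sketch of one way a classifier can turn raw scores into percentages and then smooth them over several frames. It assumes the network ends in a softmax over many categories, which is common for image classifiers but is not something I have confirmed for Teradeep’s model. The scores, the 1000-category count, and the function names are all made up for illustration.

```python
import math

def softmax(scores):
    """Convert raw class scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def average_over_frames(frame_probs):
    """Average per-class probabilities across several frames."""
    n = len(frame_probs)
    return [sum(column) / n for column in zip(*frame_probs)]

# Hypothetical scores: one clear winner among 1000 categories.
scores = [5.0] + [0.0] * 999
probs = softmax(scores)
top = max(probs)
print(f"top confidence: {top:.1%}")  # prints "top confidence: 12.9%"

# Hypothetical two-class probabilities from two consecutive frames.
smoothed = average_over_frames([[0.2, 0.8], [0.4, 0.6]])
print(smoothed)
```

Even with a clear winner, the probability mass is shared across every category, so the top percentage can look small. Averaging over frames trades responsiveness for stability, which may be what the frame-count setting controls.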

Overall, this is a pretty amazing tool. I’d really like to dig into how the actual neural network model works. For example, some questions that I have:

- What determines “confidence”? I understand that the image is compared to the large database of potential matches… Perhaps my question is this: How exactly does the algorithm apply that database to the provided frame?

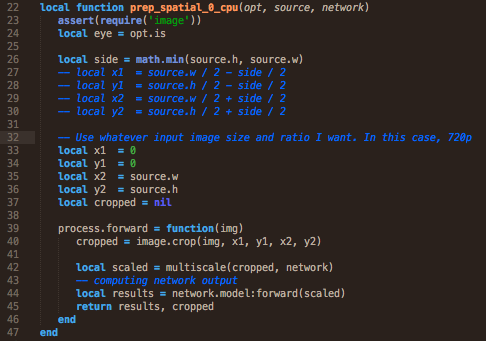

- How difficult would it be to outline the shape of a person or object? I am new to Lua and C, but it looks like the image goes into the model, then comes back out with the image and then extra data.

- How can I improve the performance of the model? What kind of specs would it take to reach 30fps at a full 1280×720 resolution (as opposed to the cropped 1:1 ratio this demo uses)?

- If the network could analyze 4k video, how much more precise could the confidence of any particular object in the frame be?

- At what point does frame detail provide diminishing returns?

- How exactly is the processing speed of the neural network affected by the resolution of the image being fed to it?

- Why can I only use one processing stream on my 2013 MacBook Pro?
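As a rough back-of-the-envelope for the resolution questions above, the sketch below assumes that processing cost grows in proportion to the number of pixels. That is roughly true for convolutional layers, but I don’t know whether it holds for this network end to end. The 720×720 crop size is also an assumption, since the demo only tells me the ratio is 1:1, and the function names are mine.

```python
def pixel_ratio(width, height, base_width, base_height):
    """How many times more pixels one frame size has than another."""
    return (width * height) / (base_width * base_height)

def estimated_fps(base_fps, ratio):
    """Naive estimate: frame rate falls in proportion to the pixel count."""
    return base_fps / ratio

crop = (720, 720)    # ASSUMED square crop; the demo's real input size is unknown
hd = (1280, 720)
uhd = (3840, 2160)   # "4k" video

hd_vs_crop = pixel_ratio(*hd, *crop)
uhd_vs_hd = pixel_ratio(*uhd, *hd)

print(f"720p has {hd_vs_crop:.2f}x the pixels of the assumed crop")
print(f"4k has {uhd_vs_hd:.0f}x the pixels of 720p")
print(f"naive 720p estimate from 11 fps: {estimated_fps(11, hd_vs_crop):.1f} fps")
```

Under these assumptions, reaching 30fps at 720p would take several times the throughput I see today, and 4k would take roughly nine times more again.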

It would be fun to build a Terminator-like video output, with a much more “fun” UX/UI for the human to interpret what the neural network “sees.” Obviously, the computer doesn’t care what it looks like. At least, in the current generation of AI. Down the road, AI may develop a taste for the aesthetic, I suppose.

This sort of specialized video rendering is already being done by the team at Teradeep, but the future applications are obvious… And, perhaps it goes without saying, ominous.

This leads me to a second set of questions with, perhaps, obvious answers:

1. If I were to train the neural network to recognize shapes of common weapon systems, what would be the “lowest-hanging-fruit” within the defense industry for this application? Could you slap this AI onto a drone to provide the pilot a more accurate understanding of the drone’s environment?

2. Could you train the neural network to understand any type of video feed? For example, if I build a “mini-tank” that has a thermal camera, infrared camera, and a standard camera, could I combine the three feeds to improve the confidence of the network’s output?
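For the second question, one simple idea is “late fusion”: run a classifier on each camera separately, then combine the per-category probabilities. The sketch below shows only the combining step as a weighted average. The labels, the probabilities, and the `fuse` helper are all hypothetical, and I have no idea whether Teradeep’s network supports anything like this.

```python
def fuse(per_camera_probs, weights=None):
    """Weighted average of class-probability lists, one list per camera."""
    if weights is None:
        weights = [1.0] * len(per_camera_probs)
    total = sum(weights)
    return [
        sum(w * p for w, p in zip(weights, column)) / total
        for column in zip(*per_camera_probs)
    ]

labels = ["person", "vehicle", "background"]

# Hypothetical outputs from three separate classifiers looking at one scene.
thermal = [0.7, 0.1, 0.2]
infrared = [0.6, 0.2, 0.2]
visible = [0.4, 0.3, 0.3]

fused = fuse([thermal, infrared, visible])
best = labels[fused.index(max(fused))]
print(best, [round(p, 3) for p in fused])
```

Note that averaging does not automatically raise the top percentage. What it does is dampen the effect of any single camera being wrong, and the weights could favor whichever sensor is most trustworthy in the current conditions.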

Curiosity abounds when engaging in what almost feels like the “dark arts” of the modern day… Namely, the vision of future synthetic intelligence combined with modern defense capabilities.

I’ll be writing more about this as I continue to tinker and test. Perhaps there is a less opaque, open-source neural model I can find out there…

Thank you for trying this out! And for sharing your experience!

Most of your first set of questions can be answered with a “Yes we can”, and we are working on it as we speak. Localization of objects, better confidence and accuracy (always improving in our labs!), larger images: these are all things we have working here, and we will release them soon as advanced demonstrations. Please stay tuned!

Hey, Eugenio! It was my pleasure, truly! Thank you to both you and your team. Looking forward to future updates.